Critical Affordance Analysis for Digital Methods: The Case of Gephi

The following chapter addresses the need for “tool criticism” approaches in research designs that build on “digital methods,” and thus medium-specific methods, using the example of network exploration and mapping software Gephi. In order to perform tool criticism as an ongoing, active and reflective, situated and systematic approach, I develop the lens of “critical affordance analysis.” Drawing from and expanding the notion of “research affordances” I explore and inquire into the “account(‑)ability” of digital methods tools, on the one hand understood as offering and generating documentation of these tools’ workings, and on the other hand, as supporting the comprehensibility of their workings. In conclusion, I advocate approaching the design of the affordances, or action possibilities, of such knowledge instruments in epistemological terms.

Introduction

Any editor that is beyond trivial needs an undo, for we are mere mortals. – bentwonk 2011

Gephi is an open-source software program that facilitates the mapping, exploration, and manipulation of network data (Bastian, Heymann, and Jacomy 2009). It originated from a prototype devised to research web data from a social perspective, and has subsequently been designed as a consumer product (Heymann 2010). As such, Gephi functions as a “digital methods” tool for the study of “natively digital objects and the methods that routinely make use of them” (Rogers 2013, 19). The Gephi website advertises the screen-based tool as “Photoshop™ for graphs” (Gephi.org 2018a), stressing its accessibility for non-expert users (e.g., Bastian et al. 2009; Heymann 2010). Gephi’s features suggest an apparent convenience: the tool lets the user operate analytical principles implemented in the software, such as its layout algorithms, which spatialize the graphical representation of a network diagram at the push of a single button (Bastian et al. 2009).

Notably, the analogy between Photoshop and Gephi does not only highlight what the latter software program offers its users, but also what it lacks: Photoshop and similar software applications track the users’ actions within the application, thereby assembling a history of image modifications.[1] Based on this history users can undo and redo actions. Gephi, however, does not provide users with otherwise commonplace software features such as undo and redo commands, nor corresponding buttons, or a graphical manager at the user interface to trace back, reverse, or reproduce previous operations. “Where is undo in Gephi?” is therefore a recurring question amongst the Gephi community, one that frequently arises in the forums and discussion feeds hosted by the platform that address questions of software use and development.[2]

Due in part to its relative ease of use, Gephi has become a popular research tool in the humanities and social sciences.[3] Researchers who use it become “editors,” to borrow from the user quote above, who perceive and handle the data they investigate through Gephi’s graphical user interface (GUI). The lack of an undo button, however, implies a missed opportunity to log the “data editing work” these researchers perform. Gephi’s design thus raises the question of how scholars account for the “interpretative acts” (Drucker 2014, 66) they conduct within the frame set by the materiality of the software tool.

Gephi exemplifies the role that software and digital tools currently play in critical data studies (CDS) disciplines such as the humanities and social sciences. CDS approaches focus on the study of data in the context of their inherently social and technical “making,” which should include the software programs and platforms involved in this process (e.g., Iliadis and Russo 2016). In this, CDS draws from the tradition of science and technology studies (STS) and more recent new media studies approaches such as software and algorithm studies, fields that inform my study of digital methods, tools, and related research practices. In this chapter, I inquire into Gephi’s “research affordances” (Weltevrede 2016), that is, the action possibilities that Gephi’s “sociotechnical system” (Niederer and Van Dijck 2010) offers researchers to comprehend the research material and the methods implemented in the tool for data analysis. In doing so, I question the role of software tools in CDS practices and answer recent calls for “tool criticism” (e.g., Paßmann 2013; Rieder and Röhle 2017; Van Es, Wieringa, and Schäfer 2018).

This approach makes such calls concrete. By means of a critical analysis of Gephi’s software affordances, I demonstrate how tool criticism can take shape as an interactional, situated, and systematic analysis of the design of a digital tool.[4] Tool criticism in this approach becomes an integral part of the research process (e.g., Van Es et al. 2018), and thus an ongoing means to inquire into the ways in which the tool shapes the epistemic process, that is, the production and dissemination of knowledge. Understanding Gephi’s affordances as sociotechnical mechanisms (e.g., Curinga 2014) implies a continuous analysis of the platform that fosters the tool. This engagement with the sociotechnical system stimulates “deeper involvement with the associated knowledge spaces to make sense of possibilities and limitations” (Rieder and Röhle 2017, 119).

I engage in this form of tool criticism by focusing on the modes of “algorithmic account-ability” (Neyland 2016, 55 following Garfinkel 1967) that Gephi either stimulates or constrains. “Account-ability” is understood in an ethnomethodological, process-oriented sense as stimulating the traceability and inspectability of the tool (Neyland 2016, 55). In conclusion, I will advocate thinking of research affordances in epistemological terms. In addition to supporting explorations of the objects and sites of study, “epistemological affordances” should facilitate and encourage an understanding and evaluation of the software tool’s influence on the epistemic process. Moreover, I will make inferences concerning the required ethos of researchers, who, in the context of digital methods and knowledge tools, are no longer simply tool users, but also developers.

Criticism of Algorithmic Knowledge Tools

The criticism of knowledge technologies has a long tradition in STS. Bruno Latour (1987) reveals in his seminal volume Science in Action how epistemic processes in the natural sciences are inherently constructed sociomaterially, a product of a series of interactions and negotiations between human actors, (technical) materials, and laboratory instruments. His observations led Latour to argue that, instead of focusing on “ready made science,” the production of knowledge should be studied “in the making” (Latour 1987, 4). Digital methods constitute at the same time a continuation of, and a breach with, STS: in their appreciation of grounded theory, digital methods are designed to “follow the medium,” studying, for example, online interactions through data from social media platforms (Rogers 2013, 24–27). Studying “the social” then implies fathoming the medium and the ways in which their intertwining “inscribes” into the research material (Venturini et al. 2018; Weltevrede 2016).

In order to approach the platforms and extract their data it is necessary to access, modify, and build software. Thus, as humanities scholars and social scientists we have moved from an observing to a participating role, in which our research thrives on algorithmic knowledge technologies. Bernhard Rieder and Theo Röhle (2017) aptly note that this dependency on tools, which are—due to their software qualities—subject to technical “black-boxing” (112), poses challenges to scholars who aim to not just access but assess the tools’ workings. They are challenged to account for the influence of in-built analytical principles on the epistemic process. For instance, Gephi “mobilizes” social network analysis building on mathematical principles of graph theory in combination with the study of social interaction (Rieder and Röhle 2017, 117–119).

Moreover, the Digital Methods Initiative, founded by Richard Rogers (University of Amsterdam), and the Paris-based Sciences Po médialab, which could be considered the intellectual roots of Gephi, illustrate that the divide between the builder, user, and critic of knowledge technologies no longer holds. Stuart Geiger’s (2017) CDS investigation of Wikipedia as an epistemic infrastructure, conducted from the perspective of a long-term active contributor to the open-source platform, underscores this vanishing divide in scholarly research. Based on his “ethnographic engagement” with Wikipedia’s “algorithmic system,” Geiger furthers previous empirical work interrogating the online encyclopedia’s “organizational culture” (2017) and its platform politics (e.g., Niederer and Van Dijck 2010). In doing so, Geiger advocates ongoing and situated forms of software tool criticism as part of the research process, and from a position within the epistemic infrastructure under scrutiny. Like Wikipedia, Gephi is defined by an open-source organizational culture, and as such its development is well documented by, and accessible to, the online community. For example, the Gephi Wiki, the Forums, and the Facebook group lend themselves to an empirical and situated engagement with the platform.

Geiger’s work also indicates that algorithmic tools add an interactional dimension to the study of epistemic processes “in action.” Approaching software in action should pose the question of how agency, understood as the capacity to take specific action, comes into being and is (re)distributed through software (Mackenzie 2006, 7–10). Agency, here, materializes through interaction between human actors and computational systems. This interaction is a constant struggle between what the software invites and allows for, how the user takes advantage of these action possibilities, and how the program responds in turn (Mackenzie 2006, 7). Human and algorithmic agencies become entangled through development and use settings, in such a way that the working process with software obscures who is acting upon whom or what, and according to whose ideas and intentions (Mackenzie 2006, 10). Specifically, GUI tools invoke an illusion of control over the algorithmic, and thus (partially) automated, processing of information. Yet, the “separation of instruction from execution” obstructs access to the underlying principles, a condition aptly captured in Wendy Chun’s (2011) notion of “programmed visions” (11).

The aforementioned developers’ analogy between Photoshop and Gephi helps to expose the tensions that a GUI program’s materiality presents to researchers. Considering Photoshop, Lev Manovich claims “we need to understand media software – its genealogy (where it comes from), its anatomy (interfaces and operations), and its practical and theoretical effects” (2013, 124). Manovich offers a historically informed examination of software and its design. He demonstrates that software’s materiality amplifies and shifts cultural production and particular media practices such as the composition and editing of (moving) images. Manovich, however, does not cut critically through the GUI level. As Alexander Galloway, another software scholar, rightly observed, Manovich’s approach to software neglects to tackle the “problem of action” (Galloway 2012, 24). That is, in his plea for a “software epistemology” (2013, 337–341), Manovich foregrounds neither the executable layers of software (its “operations”) nor its “politics,” the visions of developers reified through the tools’ technical specifications.

My objective is to explore in which ways Gephi’s materiality, and the developers’ design choices resonating in it, mediates how users interact with the tool and, accordingly, how they perceive the data they analyze influenced by the tool’s workings. Expanding Manovich’s approach to application software, I inquire critically into Gephi’s design through its affordances, the ways in which the software presents the user with opportunities to perform particular actions (e.g., Curinga 2014). Moreover, I question the cultural conventions and social implications of these “possibilities for action” (Hutchby 2001, 444). I argue that such a critical analysis of “software affordances” alludes to both the dimensions “in action” signifies: the diachronic (Latourian) reading, and the literal meaning of experimenting with the tool, consciously tracing agencies and (methodological) bias in this interaction.

Software Tool Criticism Inquiring into Their Affordances

Affordance analysis is rooted in design theory, especially that of human–computer interaction (HCI) (e.g., Gaver 1991). Affordances direct attention to the investigation of not only the psychology (Norman 1988), but also the social meaning, or “politics,” of the design rationale behind the (range of) action possibilities a specific setting suggests to a particular actor (Hutchby 2001). Sociologist Ian Hutchby (2001) explicates how investigating a setting as a possibility space for certain actions also invites the study of their discursive layers and, consequently, the questioning of the sociocultural conventions designed into an environment. In software interfaces, an important starting point for affordance analysis is the “perceptible” information provided on affordances (Gaver 1991, 80). Matthew Curinga (2014) demonstrates how examining this interface language unravels the prioritization of certain action possibilities, for example through default settings. Curinga shows how such an analysis, in combination with a reading of developers’ information, including online platforms’ terms and conditions, helps to disentangle and understand corporate software environments in situated manners (2014).

In this sense, I conceive of the perceivability of software affordances not only in terms of their explorability (e.g., Gaver 1991), but also their understandability, in scholarly contexts. The critical analysis of software’s sociomaterial mechanisms assists in considering it not merely as a tool, simply fulfilling functions assigned to it. Rather, a critical affordance analysis approaches software tools as “(scientific) inscription devices” (Latour 1987, 223–228; Venturini et al. 2018, 6), which are active in knowledge production and affect the shape of research outcomes. This implies scrutinizing the tool characteristics of software, interrogating its seemingly “ready-to-hand” qualities (e.g., Curinga 2014). In a similar fashion, Esther Weltevrede (2016) introduces the notion of “research affordances” to access the analytical opportunities digital technologies and platforms offer to “repurpose” them in digital methods, in order to make sense of the data and the medium.

In this study, I appropriate her term in a slightly different manner: as a means for research as well as (further) development of digital methods and tools. I understand research affordances as methodological instruments to evaluate and decide which action possibilities and related perceptible information to work on, improve, or add. In this I suggest looking at software affordances in epistemological terms, probing the politics of the knowledge community that fosters the tool. In particular, I look for “account-ability” in relation to software tools, understood as the ability to take account of a situation, the capability to account for one’s actions in this situation and, thus, to become and remain open to inquiry (Neyland 2016 in reference to Garfinkel 1967). Gephi, as a tool for “Visual Network Analysis” (Venturini, Jacomy, and Jensen 2019) with palpable outcomes, is a perfect showcase to examine what becomes visible—observable and reportable (e.g., Garfinkel 1967)—and what remains invisible, and therefore somehow inaccessible, and for which reasons. In searching for “digital methodology” in digital methods (e.g., Van Es et al. 2018, 26), I argue that a tool’s research affordances should assist in the exploration and understanding of, and reflection on, methods implemented into this tool.

I introduce “critical affordance analysis” as a manner of fleshing out Rieder and Röhle’s (2017, 114) suggestion of critical practice that oscillates between actual technical work and reflection. Drawing on their idea of “digital Bildung” (Rieder and Röhle 2017, 111, adapting David Berry’s concept; italics of source) I conceive of this tool criticism approach as an iterative exercise with the educative opportunity for researchers to acquire an interrogating attitude as part of their scholarly ethos. In the “functional and relational” sense in which Hutchby defines affordances (2001, 444), they offer a means to introspect and remain introspecting one’s own capacities, knowledge, and bias in relation to the applied tools, as well. Moreover, in terms of designing ways of interfacing with data, this approach helps to investigate in which ways methodological criticism is and could be reflected in the tool and the output it produces. Inspired by Johanna Drucker (2014), I use the angle of affordances to pose the question of how to render visible the process of interpretation in the exploration and communication of data: I examine if and how software interfaces can help to inspect and present matters of ambiguity and perspective, instead of providing a deceiving impression of objectivity and transparency.

Studying Gephi’s Research Affordances “In Action”: The Example of Les Miserables

I start inquiring into Gephi’s research affordances by exploring the tool’s way(s) of enabling the interfacing between scholar and tool. In order to have access to Gephi’s full spectrum of functionalities and experience its workings the tool requires data input. Investigating Gephi’s affordances, I focus on default specifications and other prominent features, such as the access the program offers to the “Coappearance Network of Characters in Les Miserables.” This data sample is also featured in the “Quick Start Guide,” one of the few tutorials branded an “Official Tutorial” by the Gephi platform (Gephi.org 2018b). I use resources such as the Quick Start Guide and other (academic) publications addressing Gephi’s design rationale to assess the discursive layers of the tool’s affordances.

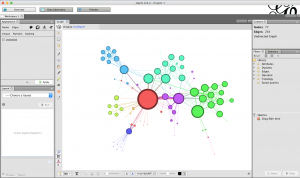

At start-up, Gephi opens a pop-up window that links to three different datasets prepared and prepackaged for users new to Gephi. First in the list is the Les Miserables set, which is the smallest of the three exercise samples, consisting of 77 nodes and 254 edges. The prominent place of the Les Miserables sample, both on the screen and in the tutorial, encourages novice users to explore the tool by experimenting with the sample. The Les Miserables sample appeals to the imagination of the user: it is composed of nodes representing characters derived from the novel of the same name (Knuth 1993), linked by edges that express and merge their co-appearances at different stages of the plot in the graphical representation of “weighted” lines (see Figure 1). In an analytical sense, though, this “ready-made” is rather “inoperative” in its current state, as the following exploration will highlight.

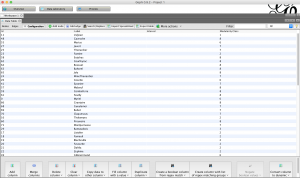

Upon opening the sample, the user is faced with the working Gephi interface, which offers three tabs. Gephi’s analytical strength resides in the Overview tab (Figure 1), which allows for spatializing and exploring the data. The Data Laboratory contains the dataset (Figure 2), including the metrics from preceding analyses (e.g., Modularity Class values). Finally, Preview invites the user to tweak the output of the (static) network diagram. Looking at the Les Miserables graph in Overview makes the user realize that it has been prepared (Figure 1). Finding documentation on this preparatory work, though, is demanding. The dataset itself contains a reference to its creator, Donald Knuth (1993), and the Quick Start Guide tutorial provides some clues on the construction work in Gephi. Consulting the tutorial, I assume that the ForceAtlas layout algorithm has been used to spatialize the graph.

Figures 1 and 2: Gephi’s “Overview” and “Data Laboratory” (nodes table) after opening the Les Miserables dataset

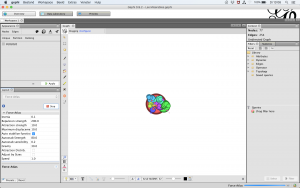

Setting the selected algorithm in motion by hitting the Run button results in an on-screen simulation of the spatialization process (resulting in the display shown in Figure 3). While ForceAtlas is “running,” it is possible to modify its settings in the Layout panel and thus manipulate this simulation, for instance by adjusting the property values: playing around with ForceAtlas’s settings under its subheading Repulsion Strength, setting the property value to 10,000.0, returns a graphical display similar to the starting position (e.g., Figure 1). These adjustments result in an instantaneous expansion and discernible clustering of the graph.

Figure 3: The Les Miserables graph after adapting ForceAtlas’s settings

Through this impression of immediacy, Gephi’s Overview tab functions as an interface to the data, living up to Ben Shneiderman’s (1982) GUI design ideal of “direct manipulation.” It is questionable how valuable this data interface is, however, because the information that Gephi’s GUI presents to users on the analytical affordances they access is sparse: by hovering over the blue Information button featured in the Layout panel, users get some general information on the “Quality” and the “Speed” realized in the spatialization algorithm. In addition, the interface offers information on ForceAtlas’s properties if the user selects a property field from the panel (e.g., Figure 3, on the left). This information, however, is predominantly “instructive”: it advises how to adjust the algorithm’s properties to make the algorithm “behave” according to one’s wishes. Yet, formulating these wishes requires access to external documentation on the ForceAtlas series and its workings, such as official papers published by the Gephi core team (e.g., Jacomy et al. 2014). Accessing such external descriptions implies that users need to consult the Gephi platform.

To stress the importance of recording the conditions that led to the construction of a dataset, I use the example of the effects of community detection algorithm Modularity Class on the data (Blondel et al. 2008): executing this procedure, it is possible to change the default “resolution” of “1.0” applied in the calculation process, which affects the number of smaller communities the algorithm detects. Consequently, this change would impact the grouping of nodes, and the possibility of coloring nodes based on this classification.
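The sensitivity of a grouping to the resolution setting can be sketched with a toy example. The following is a minimal illustration in Python, not Gephi’s implementation: it assumes one common generalized form of modularity, in which a resolution factor (here called gamma) scales the penalty term, and Gephi’s own resolution option may be parametrized differently. The six-node network, the two candidate groupings, and all function names are hypothetical.

```python
from collections import defaultdict

# Hypothetical toy network: two triangles (0-1-2 and 3-4-5) joined by one edge.
EDGES = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]

# Two candidate groupings of the same six nodes.
TWO_GROUPS = {0: "a", 1: "a", 2: "a", 3: "b", 4: "b", 5: "b"}
ONE_GROUP = {n: "a" for n in range(6)}


def modularity(edges, membership, gamma=1.0):
    """Generalized modularity: sum over groups of L_c/m - gamma * (d_c/2m)^2.

    L_c counts edges inside group c, d_c sums the degrees of its nodes,
    m is the total number of edges. gamma is an assumed resolution factor.
    """
    m = len(edges)
    internal = defaultdict(int)    # edges with both ends in the same group
    degree_sum = defaultdict(int)  # summed node degrees per group
    for u, v in edges:
        degree_sum[membership[u]] += 1
        degree_sum[membership[v]] += 1
        if membership[u] == membership[v]:
            internal[membership[u]] += 1
    return sum(internal[c] / m - gamma * (degree_sum[c] / (2 * m)) ** 2
               for c in degree_sum)


def preferred(gamma):
    """Name the grouping that scores higher at the given resolution."""
    two = modularity(EDGES, TWO_GROUPS, gamma)
    one = modularity(EDGES, ONE_GROUP, gamma)
    return "two groups" if two > one else "one group"


if __name__ == "__main__":
    for gamma in (1.0, 0.2):
        print(gamma, preferred(gamma))
```

In this sketch the same data favors a split into two groups at gamma = 1.0 and a single group at gamma = 0.2. A reader who only sees the resulting node colors cannot tell which setting produced them, which is why the applied value needs to be recorded alongside the dataset.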

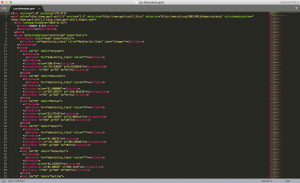

The same holds for the parameters set for the layout algorithm used, for instance ForceAtlas. While the Data Laboratory does not provide information on these analytical principles applied to the dataset, they leave at least some traces in the graph file, such as the positions of nodes (see Figure 4). Nevertheless, these positions offer no clues on the adaptable properties of the layout algorithm, which, applied to the graph spatialization, influence the coordinates that delineate the node positions.

Figure 4: The “ready-made” Les Miserables dataset in Graph Exchange File format (GEXF)
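What such a file does and does not carry can be checked programmatically. The sketch below parses a hand-written fragment modeled on the GEXF structure; it is not the actual exercise file, the coordinates are invented, and the namespace URIs are assumed to follow the 1.2 draft of the format (the sample shipped with Gephi may use another version). The function names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hand-written fragment in the spirit of a GEXF file; coordinates are invented.
FRAGMENT = """<gexf xmlns="http://www.gexf.net/1.2draft"
      xmlns:viz="http://www.gexf.net/1.2draft/viz" version="1.2">
  <graph defaultedgetype="undirected">
    <nodes>
      <node id="0" label="Myriel"><viz:position x="-10.0" y="25.0" z="0.0"/></node>
      <node id="1" label="Napoleon"><viz:position x="-40.0" y="30.0" z="0.0"/></node>
    </nodes>
    <edges>
      <edge id="0" source="1" target="0" weight="1.0"/>
    </edges>
  </graph>
</gexf>"""

# Assumed namespace URIs (GEXF 1.2 draft).
NS = {
    "g": "http://www.gexf.net/1.2draft",
    "viz": "http://www.gexf.net/1.2draft/viz",
}


def read_positions(gexf_text):
    """Return {label: (x, y)} for every node that stores a position."""
    root = ET.fromstring(gexf_text)
    positions = {}
    for node in root.findall(".//g:node", NS):
        pos = node.find("viz:position", NS)
        if pos is not None:
            positions[node.get("label")] = (float(pos.get("x")), float(pos.get("y")))
    return positions


def element_names(gexf_text):
    """List the distinct element names used in the file, without namespaces."""
    root = ET.fromstring(gexf_text)
    return sorted({el.tag.split("}")[-1] for el in root.iter()})


if __name__ == "__main__":
    print(read_positions(FRAGMENT))
    print(element_names(FRAGMENT))
```

The fragment yields coordinates for each node, but the list of element names contains nothing that names a layout algorithm or its property values. The positions are the result of an interpretive decision, while the decision itself is absent from this fragment.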

According to the developers of the file format in which the exercise sample has been made available, “[t]he key word is exchange…[of] at least the graph topology and data. … The goal is to represent a network’s elements: nodes, edges and data associated to them” (GEXF Working Group 2009). It is disputable, however, whether “exchange” in epistemological terms is possible when this file format does not afford the exchange of information on interpretive decisions and, herein, the analytical principles applied to the data of interest.

Accessing and assessing the graph spatialization implies understanding Gephi’s politics in terms of its scholarly focus on social science methods: the ForceAtlas layout algorithms have been specifically devised and fine-tuned for application in Gephi. They combine attraction forces along edges, which pull connected nodes together, with repulsion forces between nodes, which results in a clustering based on degree (number of node connections) (Jacomy et al. 2014, 2). This clustering principle, termed “modularity” (Jacomy et al. 2014, 2), quantifies and visually focuses (purely) on the connectedness of actors based on their “social” relations.
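The interplay of attraction and repulsion can be illustrated with a generic force-directed sketch. This is not ForceAtlas: the force laws (attraction proportional to distance along edges, repulsion inversely proportional to distance between all node pairs), the step size, the number of iterations, and all names are assumptions chosen for simplicity.

```python
import math


def layout(positions, edges, repulsion, steps=500, step_size=0.05):
    """Toy force-directed layout with linear attraction and 1/d repulsion."""
    pos = {n: list(p) for n, p in positions.items()}
    nodes = sorted(pos)
    for _ in range(steps):
        force = {n: [0.0, 0.0] for n in nodes}
        # Repulsion acts between every pair of nodes.
        for i, a in enumerate(nodes):
            for b in nodes[i + 1:]:
                dx = pos[b][0] - pos[a][0]
                dy = pos[b][1] - pos[a][1]
                dist = math.hypot(dx, dy) or 1e-9  # avoid division by zero
                push = repulsion / dist
                force[a][0] -= push * dx / dist
                force[a][1] -= push * dy / dist
                force[b][0] += push * dx / dist
                force[b][1] += push * dy / dist
        # Attraction acts only along edges and grows with distance.
        for a, b in edges:
            dx = pos[b][0] - pos[a][0]
            dy = pos[b][1] - pos[a][1]
            force[a][0] += dx
            force[a][1] += dy
            force[b][0] -= dx
            force[b][1] -= dy
        # Move each node a small step in the direction of its net force.
        for n in nodes:
            pos[n][0] += step_size * force[n][0]
            pos[n][1] += step_size * force[n][1]
    return {n: tuple(p) for n, p in pos.items()}


def distance(pos, a, b):
    """Euclidean distance between two laid-out nodes."""
    return math.hypot(pos[a][0] - pos[b][0], pos[a][1] - pos[b][1])


if __name__ == "__main__":
    start = {"a": (0.0, 0.0), "b": (2.0, 0.0)}
    for strength in (1.0, 100.0):
        result = layout(start, [("a", "b")], repulsion=strength)
        print(strength, round(distance(result, "a", "b"), 3))
```

For two connected nodes, this toy model settles where attraction and repulsion balance, at a distance equal to the square root of the repulsion value. The same data thus yields different coordinates under different settings, and the coordinates alone do not reveal which setting was used.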

In terming the exercise sample “ready-made,” I stress the ways in which this specific dataset is detached from its context of construction and, therefore, loses its suitability for analysis. Visiting the Data Laboratory, not just metaphorically, recalls Latour’s dualism between scientific knowledge as (unquestionable) product versus the study of “science in the making.” Lacking is, for instance, information on the chapters in which the included co-appearances are located. The sample does not account for the linearity or time span (of 15 years) of the plot development. Moreover, Gephi’s default spatialization algorithms do not feature analytical opportunities to use such qualitative information in the visual interpretation of the data. In a nutshell, there is no temporality or spatiality (meta)data derived from the novel and, therefore, no close reading and qualitative evaluation of connections is possible.

Donald Knuth (1993) prepared the original version of the sample as part of his technical work on “literate programming.” On his website, he states that the sample can be used “for benchmark tests of competing methods.” In Gephi, too, the sample is used as exercise material, enabling the user to put the tool into operation. The implementation of this sample, however, suggests an epistemic equalization of networks of fictional characters and “real-world” social networks, the latter constituting the more common type of dataset explored in Gephi. Therefore, it is important to note that a (visual) network analysis of the Les Miserables data sample only makes sense in comparison with other “fictional social networks.”

Thus, with regard to account-ability, the accessibility of the sample and, through this exercise material, of the tool’s executable specifications poses a “Zuhandenheit problem,” as “we use these digital tools without necessarily reflecting on them” (Galloway 2014, 126). I argue that design decisions in the development and implementation of software tools used in CDS approaches need to consider the question of the practicability of tools. That is, the Gephi platform should present research affordances that allow users not only to operate the tool but also to experience and evaluate its inscriptive qualities. Such affordances, then, should include comprehensive documentation on the previous tool preparation work and its circumstances. In part, such affordances are already implemented, for example by means of access to official tutorials, technical papers, and the logging of the tool development. Nevertheless, tutorials such as the Quick Start Guide were written for an older version of the tool and are therefore somewhat outdated. Besides, the tool preparation work and, within that, the implemented methodological principles could be featured more explicitly, for instance as part of the GUI. Realizing algorithmic account-ability in software tools, then, means designing research affordances that facilitate and stimulate the traceability and inspectability of the work performed with and on the software tool.

Discussion: Account-ability Through Software Affordances?

Returning to the point of departure of this chapter, the question arises of what an undo function would add in epistemological terms. From the perspective of HCI design, reversibility was defined as an important prerequisite for encouraging users to experiment with the tool and, in doing so, to explore the applied set of graphical representations and metaphors and the workings of the underlying system (Shneiderman 2003 [1983], 493). The question is, though, whether Gephi’s set of graphical representations and metaphors is comprehensible (just) through manipulating data at the interface level. Moreover, reversibility and reproducibility in the form of social network analysis that Gephi features do not require an undo, since settings can be changed (back) in the Layout panel. As Gephi’s network spatialization is a (mere) simulation of (social) interaction, such a tweaking of settings results in a similar graphical representation (and corresponding node positioning).

More importantly, reversibility in software is coupled with the automatic recording of performed actions and applied settings. Thus, in epistemological terms, the scarcity of documentation has a more profound impact than the lack of an undo function. Such documentation can support the “reader” of a network graph in disclosing the transformation Gephi’s Preview affords from a manipulatable spatialization to a “static still” of the research process. To turn to Latour’s vocabulary, documentation on the making of a network graph, and the scholarly conventions and methodological bias that framed this process, adds (some degree of) mutability to the (communication of) research results (1987, 223–228).

In general, there is more work needed on (the design of) data interfaces, related questions of account(-)ability in/through them, and the (im)possibility of visual evidence. With respect to “visualizing interpretation” (Drucker 2014, 64–137), Gephi’s Preview does not (even) enable the user to create and export a legend. The development of software tools should address issues beyond questions of “user experience” (e.g., Ricci and Jacomy 2015). Delivering a pragmatic solution on the road to “account-ability by design,” I contributed to a “fieldnotes plug-in” for Gephi (Wieringa et al. 2019). This plug-in alludes to the ethnomethodological tradition of taking fieldnotes by means of generating a time-stamped snapshot of the state of the network graph, simultaneously listing the applied settings in a text file and exporting a graph file.
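The idea of a time-stamped fieldnote can be sketched in a few lines. The following Python fragment is not the plug-in’s code and does not reproduce its file format; the function name, the file naming scheme, and the setting keys are hypothetical, and the export of the graph file itself is left out. It only illustrates the principle of listing the applied settings together with a time stamp in a text file.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def write_fieldnote(settings, directory, note=""):
    """Write a time-stamped text file listing the applied settings.

    `settings` is a plain dict, for example the layout name and its
    property values as read off the interface by the researcher.
    """
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    path = Path(directory) / f"fieldnote-{stamp}.txt"
    lines = [f"timestamp: {stamp}"]
    if note:
        lines.append(f"note: {note}")
    # Sort keys so that two notes can be compared line by line.
    for key in sorted(settings):
        lines.append(f"{key}: {json.dumps(settings[key])}")
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return path


if __name__ == "__main__":
    written = write_fieldnote(
        {"layout": "ForceAtlas", "repulsion_strength": 10000.0},
        directory=".",
        note="expanded the graph to inspect clusters",
    )
    print(written.read_text(encoding="utf-8"))
```

Keeping such notes next to each exported graph file would let a later reader reconstruct which settings framed a given “static still,” even if the tool itself offers no history of actions.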

Engaging in tool criticism in such a productive way, however, is an ongoing process. Becoming and staying aware of the platform politics, as well as a tool’s (methodological) possibilities and constraints, means approaching a dynamic and therefore changing sociotechnical environment. A recent blog post by the core team of Gephi developers, for instance, exposes the open source tool’s dependency on the evolution of Java, the programming language the software is written in (Jacomy 2018). Furthermore, these sociotechnical dynamics are exemplified by the introduction of ForceAtlas 2, the successor to ForceAtlas (Jacomy et al. 2014), which marked the gradual optimization of the tool for the social sciences.

Conclusion

In this chapter I presented critical affordance analysis as an ongoing, situated, and systematic means of inquiry into a digital tool’s research affordances and as a means to practice “tool criticism.” Furthering Manovich’s idea, I argue that a “software epistemology” interrogates what knowledge is and becomes in relation to software, and how we could keep asking these questions. The critical analysis of the research affordances of software tools in CDS practices, in this sense, stimulates digital Bildung. The analysis revealed that more research is needed on how to critically position the work performed in research with, and development of, knowledge technologies. This calls for a design rationale that stimulates reflection on the epistemic role of software tools, emphasizing the partiality of one’s interpretative work with the tool. I aim to encourage other scholars to become active in the Gephi community and other sociotechnical epistemic infrastructures. Acting in this context as a “software ethnographer” also asks for pragmatic approaches such as contributing to the (further) development of tools and interrogating their “epistemological affordances.”

References

Bastian, Mathieu, Sebastien Heymann, and Mathieu Jacomy. 2009. “Gephi: An Open Source Software for Exploring and Manipulating Networks.” In Proceedings of the Third International AAAI Conference on Weblogs and Social Media, 8: 361–362. San Jose, CA: AAAI Press. https://www.aaai.org/ocs/index.php/ICWSM/09/paper/view/154.

bentwonk. 2011. “Undo, Please.” Gephi Forums, QA: Ideas, Requests and Feedback, August 29. http://forum-gephi.org/viewtopic.php?f=7&t=1383.

Blondel, Vincent D., Jean-Loup Guillaume, Renaud Lambiotte, and Etienne Lefebvre. 2008. “Fast Unfolding of Communities in Large Networks.” Journal of Statistical Mechanics: Theory and Experiment 2008 (10): P10008. https://doi.org/10.1088/1742-5468/2008/10/P10008.

Chun, Wendy Hui Kyong. 2011. Programmed Visions: Software and Memory. Cambridge, MA, London: MIT Press.

Curinga, Matthew X. 2014. “Critical Analysis of Interactive Media with Software Affordances.” First Monday 19 (9). https://dx.doi.org/10.5210/fm.v19i9.4757.

Drucker, Johanna. 2014. Graphesis: Visual Forms of Knowledge Production. Cambridge, MA: Harvard University Press.

Galloway, Alexander R. 2012. The Interface Effect. Cambridge, UK & Malden, MA: Polity.

———. 2014. “The Cybernetic Hypothesis.” Differences 25 (1): 107–131. https://doi.org/10.1215/10407391-2420021.

Garfinkel, Harold. 1967. Studies in Ethnomethodology. Englewood Cliffs: Prentice-Hall.

Gaver, William W. 1991. “Technology Affordances.” In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, 79–84. New York: ACM. https://doi.org/10.1145/108844.108856.

Geiger, R. Stuart. 2017. “Beyond Opening up the Black Box: Investigating the Role of Algorithmic Systems in Wikipedian Organizational Culture.” Big Data & Society 4 (2): 2053951717730735. https://doi.org/10.1177/2053951717730735.

Gephi.org. 2018a. “Gephi: The Open Graph Viz Platform.” https://gephi.org/.

Gephi.org. 2018b. “Learn How to Use Gephi.” https://gephi.org/users/.

GEXF Working Group. 2009. GEXF File Format. https://gephi.org/gexf/format/.

Heymann, Sebastien. 2010. “Gephi initiator interview: how ‘Semiotics matter’.” Gephi Blog, February 1. https://gephi.wordpress.com/2010/02/01/gephi-initiator-interview-how-semiotics-matter/.

Hutchby, Ian. 2001. “Technologies, Texts and Affordances.” Sociology 35 (2): 441–456. https://doi.org/10.1177/S0038038501000219.

Iliadis, Andrew, and Federica Russo. 2016. “Critical Data Studies: An Introduction.” Big Data & Society 3 (2): 205395171667423. Accessed May 1, 2018. https://doi.org/10.1177/2053951716674238.

Jacomy, Mathieu. 2018. “Is Gephi obsolete? Situation and perspectives.” Gephi Blog, November 1. https://gephi.wordpress.com/2018/11/01/is-gephi-obsolete-situation-and-perspectives/.

Jacomy, Mathieu, Tommaso Venturini, Sebastien Heymann, and Mathieu Bastian. 2014. “ForceAtlas2, a Continuous Graph Layout Algorithm for Handy Network Visualization Designed for the Gephi Software.” PLOS ONE 9 (6): e98679. https://doi.org/10.1371/journal.pone.0098679.

Knuth, Donald E. 1993. The Stanford GraphBase: A Platform for Combinatorial Computing. New York: ACM Press.

Latour, Bruno. 1987. Science in Action: How to Follow Scientists and Engineers Through Society. Cambridge, MA: Harvard University Press.

Mackenzie, Adrian. 2006. Cutting Code: Software and Sociality. New York: Peter Lang.

Manovich, Lev. 2013. Software Takes Command. New York, London: Bloomsbury.

Neyland, Daniel. 2016. “Bearing Account-Able Witness to the Ethical Algorithmic System.” Science, Technology, & Human Values 41 (1): 50–76. https://doi.org/10.1177/0162243915598056.

Niederer, Sabine, and José van Dijck. 2010. “Wisdom of the Crowd or Technicity of Content? Wikipedia as a Sociotechnical System.” New Media & Society 12 (8): 1368–1387. https://doi.org/10.1177/1461444810365297.

Norman, Donald A. 1988. The Psychology of Everyday Things. New York: Basic Books.

Paßmann, Johannes. 2013. “Forschungsmedien Erforschen. Zur Praxis Mit Der Daten-Mapping-Software Gephi.” Navigationen. Zeitschrift Für Medien- Und Kulturwissenschaften: Vom Feld Zum Labor Und Zurück 13 (2): 113–130.

Ricci, Donato, and Mathieu Jacomy. 2015. “Improving the Gephi User Experience.” Gephi Blog, June 2. https://gephi.wordpress.com/2015/06/02/improving-the-gephi-user-experience/.

Rieder, Bernhard, and Theo Röhle. 2017. “Digital Methods: From Challenges to Bildung.” In The Datafied Society: Studying Culture Through Data, edited by Mirko Tobias Schäfer and Karin van Es, 109–124. Amsterdam: Amsterdam University Press.

Rogers, Richard. 2013. Digital Methods. Cambridge, MA: MIT Press.

Shneiderman, Ben. 1982. “The Future of Interactive Systems and the Emergence of Direct Manipulation.” Behaviour and Information Technology 1 (3): 237–256. https://doi.org/10.1080/01449298208914450.

Shneiderman, Ben. 2003 [1983]. “Direct Manipulation: A Step beyond Programming Languages.” In The New Media Reader, edited by Noah Wardrip-Fruin and Nick Montfort, 485–498. Cambridge, MA: MIT Press.

Van Es, Karin, Maranke Wieringa, and Mirko Tobias Schäfer. 2018. “Tool Criticism: From Digital Methods to Digital Methodology.” In Proceedings of the 2nd International Conference on Web Studies, WS.2 2018 (October 3–5): 24–27. Paris: ACM Press. https://doi.org/10.1145/3240431.3240436.

Venturini, Tommaso, Mathieu Jacomy, and Pablo Jensen. 2019. “What Do We See When We Look at Networks. An Introduction to Visual Network Analysis and Force-Directed Layouts.” SSRN (April 26). http://dx.doi.org/10.2139/ssrn.3378438.

Venturini, Tommaso, Liliana Bounegru, Jonathan Gray, and Richard Rogers. 2018. “A Reality Check (List) for Digital Methods.” New Media & Society 20 (11): 4195–4217. https://doi.org/10.1177/1461444818769236.

Weltevrede, Esther. 2016. “Repurposing Digital Methods: The Research Affordances of Platforms and Engines.” Dissertation, Amsterdam: University of Amsterdam. https://hdl.handle.net/11245/1.505660.

Wieringa, Maranke, Daniela van Geenen, Karin van Es, and Jelmer van Nuss. 2019. “The Field Notes Plugin: Making Network Visualization in Gephi Accountable.” In Good Data, edited by A. Daly, K. Devitt, and M. Mann. Amsterdam: Institute of Network Cultures. http://networkcultures.org/wp-content/uploads/2019/01/Good_Data.pdf.

Endnotes

[1] For more information on how this is realized in recent versions of Photoshop, see https://helpx.adobe.com/photoshop/using/undo-history.html (accessed November 1, 2019).

[2] For example, see the following posts and feeds in the Gephi forums and on the Gephi Wiki, from which the introductory quote is also taken: http://forum-gephi.org/viewtopic.php?f=7&t=1383 and https://github.com/gephi/gephi/issues/1175 (accessed November 1, 2019).

[3] Since the launch of the first stable version in 2008, the application software has often been employed in social and cultural investigations, specifically (social) media and web analysis. Gephi has been referenced by more than 5,000 academic papers (Bastian et al. 2009 in Google Scholar, November 1, 2019), including studies that discuss online communities, media practices, and infrastructures.

[4] Van Es et al. (2018) note that “affordance theory has already provoked us to think not about the tool alone, but the relationship between tool and user” (26). However, this bracketed sentence is not followed by concrete elaborations on how to practice tool criticism. Bernhard Rieder and Theo Röhle (2017) use the example of Gephi to argue for the need for critical engagement with the tool, but do not show how such a reflective practice could be performed. Paßmann’s (2013) ethnomethodological effort to examine Gephi’s layout algorithms is not informed by a situated study of the sociotechnical system.